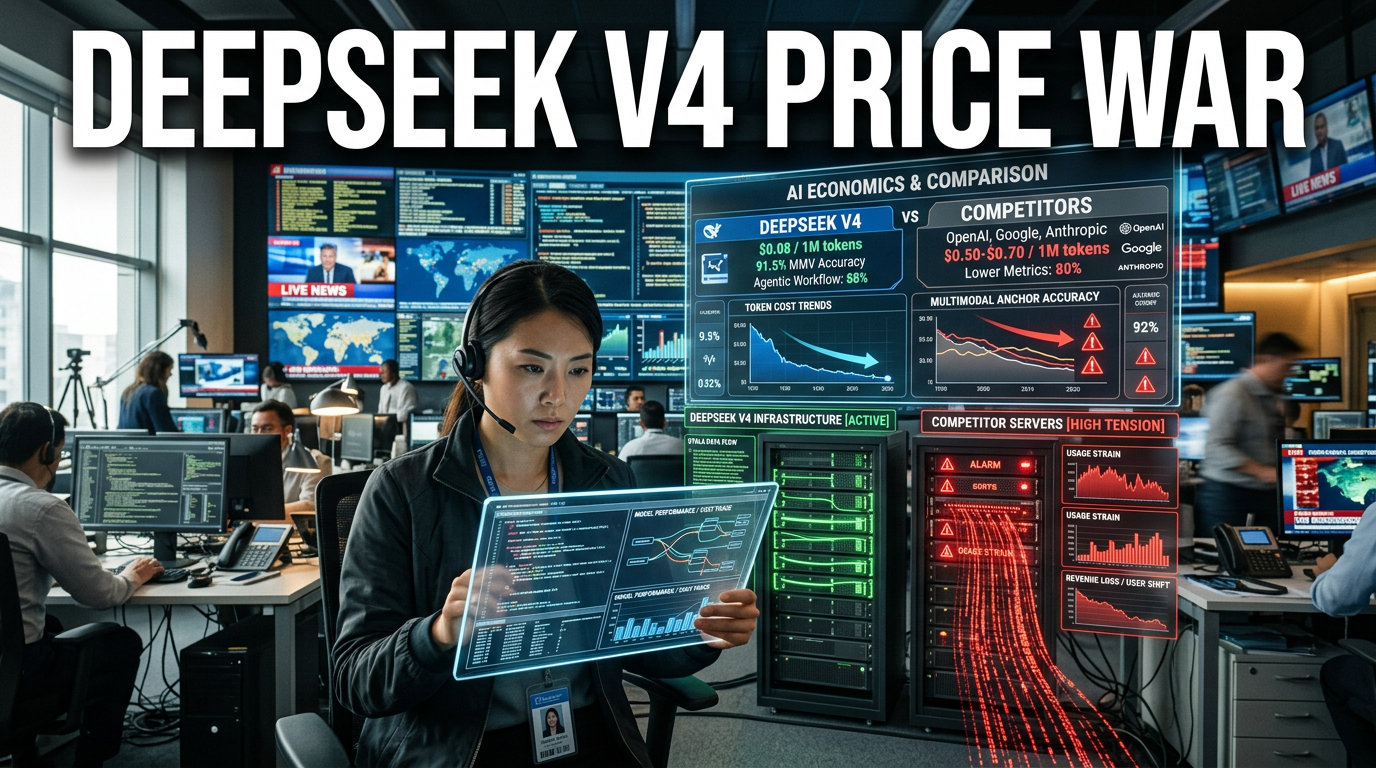

● AI Price War Ignites Across Chips And Agents

DeepSeek V4 sparks the “price-chip-agent-vision” war… Why GPT-5.6 showed up in internal logs

5 core takeaways I really want to highlight in this article (Start reading from here)

- Up to a 90% API price cut: It fully shifted model performance competition into “cost competition.”

- Verified to run on both Nvidia and Huawei chips: It’s become clear that the direction is no longer tied to a specific ecosystem (CUDA).

- Upgraded agent capabilities: Moving beyond just writing code well, toward agents that “finish the work.”

- “Think-and-point” vision (visual reasoning) system: Not just “seeing better,” but fixing the target with coordinates during reasoning.

- Routing traces of GPT-5.6 in OpenAI’s internal logs: It raises the possibility that the next version is being tested/prepared sooner than expected.

News headline: DeepSeek V4 is changing the AI market landscape

As China’s AI research lab DeepSeek rolls out V4, the global AI competition framework is shifting from a focus on “top performance” to an all-out battle centered on price (token costs) · chips (execution ecosystem) · speed · agents · vision · control/deployment.

The core is not merely that the model got better. It’s that the combination of “cheaper, close to open, runs across diverse hardware, and comes with agents/visual reasoning” shook the cost structure across the entire industry.

In this article, I’ll break down this issue by group and item, and also lay out why it’s a “truly dangerous change.”

1) The price war: “cost per token” becomes the deciding factor, not “performance”

1-1. The signal sent by a maximum 90% API price reduction

- DeepSeek reportedly cut the API price for the V4 lineup by up to 90%.

- If the unit price per token drops dramatically, companies change the habit of using only “as much as needed.”

1-2. Why token usage spikes: Jevons Paradox

When prices get cheaper, you end up using “that much more.” AI works the same way.

- Disney, Meta, and Visa are cited as cases where internal AI usage has already grown unusually fast.

- So rather than “a good model vs a better model,” it becomes about “how cheaply, how often, and how automatically you can run it.”
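
To make the Jevons argument concrete, here is a minimal arithmetic sketch. The prices and usage figures are hypothetical placeholders, not DeepSeek’s actual rates. It only shows that after a 90% price cut, total spend returns to its old level once usage grows about 10×, and exceeds it beyond that.

```python
def monthly_spend(price_per_million_tokens: float, tokens_millions: float) -> float:
    """Total spend = unit price x volume."""
    return price_per_million_tokens * tokens_millions


def break_even_usage_multiplier(price_cut: float) -> float:
    """How much usage must grow for total spend to match the pre-cut level.

    price_cut is a fraction, e.g. 0.90 for a 90% reduction.
    """
    if not 0 <= price_cut < 1:
        raise ValueError("price_cut must be in [0, 1)")
    return 1 / (1 - price_cut)


# Hypothetical numbers, for illustration only.
old_price = 10.0                      # USD per million tokens (assumed)
new_price = old_price * (1 - 0.90)    # after a 90% cut
old_usage = 500.0                     # million tokens per month (assumed)

baseline = monthly_spend(old_price, old_usage)
for growth in (1, 5, 10, 20):
    spend = monthly_spend(new_price, old_usage * growth)
    print(f"usage x{growth:>2}: spend {spend:8.2f} USD vs. baseline {baseline:.2f} USD")

print("break-even usage growth:", round(break_even_usage_multiplier(0.90), 2))
```

If usage grows less than the break-even multiplier, the buyer saves money; if agents and automation push usage past it, total token spend ends up higher than before the cut even though each token is far cheaper.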

1-3. A meaningful takeaway (from a strategy perspective)

Early on, closed-off (expensive) models may try to defend their pricing, but in the end they find it hard to beat the token-usage explosion.

In other words, even if DeepSeek-style approaches aren’t “#1 on every benchmark,” if they’re good enough for day-to-day work and far cheaper, they can still encroach on the market.

2) The chip/infrastructure war: A clearer push to break “Nvidia dependency”

2-1. Possibility of running on both Nvidia and Huawei chips

- It’s emphasized that V4 isn’t only validated to run on Nvidia, but also on Huawei (Ascend) lineups.

- There are also mentions of signs that support for China’s chip/serving ecosystem is expanding.

2-2. Why it can connect into a nation-level AI stack

The reason this point matters is that it can be interpreted as a signal that they’re building a “complete ecosystem” spanning chips-cloud-model, not just making the model itself better.

- The fact that China’s telecom/information-technology-related institutions have entered testing suggests a direction beyond “private-sector competition.”

- If large-scale Huawei Ascend infrastructure ends up being deployed more widely in the future, V4’s price competitiveness could become even stronger.

3) The agent war: Moving from coding tools to “work automation”

3-1. Key point: DeepSeek V4’s ‘agent capability upgrade’

- DeepSeek explains that it strengthened reasoning power and agentic capabilities in V4.

- It highlights the possibility of performing complex multi-step tasks more effectively.

3-2. OpenAI, on the other hand, is pushing Codex as a “real agent”

OpenAI also mentions a parallel trend: Codex is expanding beyond tool use, like ChatGPT’s “next moment,” into broader digital work.

- Summaries/analysis/organization/decision-support across tools like Slack, Gmail, and Calendar

- Generating spreadsheets/presentations, comparing options, tracking trade-offs, and more

3-3. The essence of the “agent war”

In the end, from the perspective of enterprise customers, agents become tools that reduce work time, reduce mistakes, and automate repetitive tasks.

So even a small improvement in model performance can change the “speed of making money.” Conversely, if a cheaper model runs agents well, the market shifts quickly.

4) The vision war: Not “AI that sees better,” but “AI that thinks while pointing”

4-1. Research core keyword: reference gap (gap in maintaining reference)

DeepSeek’s technical report frames a representative weakness of multimodal models as not just the perception gap (can’t see clearly enough), but the reference gap (loses the target while reasoning).

In other words, the model can do “image recognition,” but the core issue is its inability to keep coordinates/targets stable throughout reasoning.

4-2. Solution approach: embed point/bounding-box into the “thinking process”

- Not merely referring to the target verbally (“the bear on the left”)

- Fixing the anchor with coordinates/bounding boxes and continuing the reasoning

- During the process, a “think-and-point” mechanism appears
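
The idea of anchoring reasoning to coordinates can be illustrated with a small sketch. This is not DeepSeek’s actual format or mechanism; the `Anchor` class, the box layout `(x_min, y_min, x_max, y_max)`, and the 0.5 overlap threshold are all assumptions made for illustration. It shows how fixed boxes make “left of” questions and “already counted” checks unambiguous, where purely verbal references tend to drift.

```python
from dataclasses import dataclass

# Assumed box layout: (x_min, y_min, x_max, y_max) in pixels.
Box = tuple[int, int, int, int]


@dataclass(frozen=True)
class Anchor:
    """A named target pinned to a bounding box (hypothetical structure)."""
    name: str
    box: Box

    @property
    def center_x(self) -> float:
        x_min, _, x_max, _ = self.box
        return (x_min + x_max) / 2


def is_left_of(a: Anchor, b: Anchor) -> bool:
    """Resolve a relative reference from coordinates, not from wording."""
    return a.center_x < b.center_x


def iou(a: Box, b: Box) -> float:
    """Intersection over union of two boxes."""
    ix = max(0, min(a[2], b[2]) - max(a[0], b[0]))
    iy = max(0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = ix * iy
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def count_unique(boxes: list[Box], threshold: float = 0.5) -> int:
    """Count targets, skipping boxes that overlap one already counted."""
    counted: list[Box] = []
    for box in boxes:
        if all(iou(box, seen) < threshold for seen in counted):
            counted.append(box)
    return len(counted)


capacitor = Anchor("red capacitor", (100, 200, 140, 260))
inductor = Anchor("inductor", (300, 200, 360, 260))
print(is_left_of(capacitor, inductor))

# The second box mostly overlaps the first, so it is treated as a recount.
print(count_unique([(0, 0, 10, 10), (1, 1, 11, 11), (50, 50, 60, 60)]))
```

Once each target carries its own coordinates, a later reasoning step can refer back to the same box instead of re-describing it in words.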

4-3. Why this matters: where real-world visual tasks break

- In a dense crowd, the problem of needing to count but losing track of the already-counted targets

- In circuit diagrams, problems that require precise relative references like the left/right relationship of a red capacitor and an inductor

- In maze/path tracking, logic breaking due to mixed expressions like “left/right”

4-4. From a performance/efficiency perspective: claims of better results even with less visual memory

The report explains that it keeps about 90 visual memory entries for an 800×800 image, and includes comparisons suggesting its memory efficiency is higher than that of other models.

Ultimately, the argument is that it can become more favorable for real-time requirements—faster, cheaper, and useful for robots/autonomous driving/video analysis.

5) OpenAI issue: GPT-5.5’s “goblin bug” and Codex expansion

5-1. An “odd pattern” where GPT-5.5 inexplicably increases references to monsters/creatures

- Reportedly, creatures like goblins, gremlins, and trolls were repeatedly mentioned regardless of the conversation topic.

- It may look like a simple meme, but it can be quite damaging in terms of user experience and trust in quality.

5-2. Signs that forbidden words were repeatedly blocked in prompts

It’s mentioned that OpenAI put those creature words into prompts so they get blocked when they’re not relevant. There are also reports that users sometimes managed to coax the forbidden terms back out: “they came back again.”

5-3. Codex, in contrast, quickly evolves into a ‘serious agent’

One side (GPT-5.5) shows quirky bug-like exposures, while the other (Codex) expands around work automation. This contrast made the “market competition” even more interesting.

6) Center of controversy: the claim that GPT-5.6 appeared in internal logs/routing

6-1. Interpretation: closer to “test/routing traces” than to “release confirmation”

The important point is that the GPT-5.6 sighting may not mean the model has been publicly released; rather, routing labels were reportedly visible in backend logs.

6-2. Why timing still matters

- It coincides with the moment DeepSeek V4 is pressuring the market with price/openness/vision/agents.

- It suggests an environment where OpenAI has to prepare a response even faster.

6-3. Why this is “truly dangerous” (core viewpoint)

Open, low-cost models in the DeepSeek style aren’t about winning once on a specific task and stopping there; they’re about running well enough consistently while maintaining a cost advantage.

So OpenAI can’t compete by performance gaps alone. It must compete across deployment, cost, speed, and agent integration as well.

7) Internal changes at DeepSeek: leadership/talent retention/research-team expansion

7-1. Less public activity from the founder + changes in ownership/capital

Along with reporting that the DeepSeek founder has reduced public-facing activity, changes in corporate/capital-related metrics (like increased equity) are mentioned.

7-2. Elevation of key researchers (more external activity) and long-term research signals

- A research figure who participated in the V3/R1/V4 series appears more in external events/presentations.

- Some statements reportedly even touched on social issues such as the “impact on jobs.”

7-3. Team size growth: a sign they can prepare for a long war

With figures suggesting increased research/engineering personnel, it reads as a signal that they can operate continuous improvement cycles, not just a one-off release.

8) Beware the “viral screenshot” trap: don’t conclude everything from one hit

There’s one pitfall readers often overlook here.

- Screenshots may circulate showing that a certain model fixed a bug better once.

- But LLMs are stochastic: without “repeatability,” a single win isn’t a valid benchmark.

So you should be cautious about blanket claims like “V4 beat GPT-5.4 / pressed down Claude.” Real evaluation is about repeated testing in your own workflow, your stack, your prompts, and your cost limits.
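
As a rough sketch of what “repeated testing” could look like, the snippet below runs the same task many times and reports a pass rate and an expected cost per successful result. Everything here is assumed for illustration: `run_once` stands in for whatever calls your model and checks the output, and the success probabilities and per-run costs are made up and simulated with a random number generator rather than a real API.

```python
import random
from typing import Callable


def pass_rate(run_once: Callable[[], bool], trials: int) -> float:
    """Fraction of trials in which the task succeeded."""
    if trials <= 0:
        raise ValueError("trials must be positive")
    successes = sum(1 for _ in range(trials) if run_once())
    return successes / trials


def cost_per_success(cost_per_run: float, rate: float) -> float:
    """Expected spend to get one successful result, retries included."""
    if rate <= 0:
        return float("inf")
    return cost_per_run / rate


def simulated_model(success_probability: float, seed: int) -> Callable[[], bool]:
    """Stand-in for a real model call plus an automatic check (hypothetical)."""
    rng = random.Random(seed)
    return lambda: rng.random() < success_probability


# Hypothetical candidates: (success probability, USD per run).
candidates = {
    "expensive-model": (0.90, 0.100),
    "cheap-model": (0.80, 0.010),
}

for name, (probability, cost) in candidates.items():
    rate = pass_rate(simulated_model(probability, seed=0), trials=200)
    print(f"{name}: pass rate {rate:.2f}, cost per success {cost_per_success(cost, rate):.4f} USD")
```

A single viral screenshot corresponds to one trial; the comparison only becomes meaningful once the pass rate is measured over many runs and weighed against cost.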

Today’s conclusion: The winner in the AI war isn’t the “best model,” but the “best value + deployment + integration”

If I had to summarize this issue in a single sentence, it’s this.

This isn’t just a fight over model specs; it’s turning into an “ecosystem war” that includes token cost, chips, speed, agents, and vision integration.

And DeepSeek V4 pushed that change with “prices you can actually feel,” while OpenAI faced such strong pressure that even internal logs showed preparation traces for the next step (GPT-5.6).

Now, for readers/companies, the question is less about which model is “the smartest,” and more about which combination of models finishes work the most cheaply, the fastest, and the most reliably.

Main points I want to deliver (the real takeaways that people don’t talk about elsewhere)

- The real scary part of price cuts: It doesn’t just crown a new #1 on benchmarks; it changes the “behavior itself” through the token-usage explosion.

- A shift in vision: It’s no longer a contest to “see more pixels,” but a contest to embed “coordinate anchors” so the model doesn’t lose the target during reasoning.

- Chip political economy: The push to reduce Nvidia dependence isn’t just technical—it connects to supply-chain and self-reliance strategy.

- Agents aren’t a feature—they’re an operating model: The key is the shift from messenger-style chat to an operational workflow type (summary → decision → organization → tracking).

- Reproducibility decides the winner: Viral wins are only a reference point; make your judgment via repeated testing that includes cost/speed.

< Summary >

DeepSeek V4 cuts API prices by up to 90% and emphasizes that it runs on both Nvidia and Huawei chips. It also brings agent capabilities and multimodal vision that “reasons while pointing” (based on coordinate anchors). This combination is changing the market from a “performance” war to a “token cost · speed · chip ecosystem · integrated agents” war. At the same time, OpenAI sees both GPT-5.5’s goblin bug and Codex’s agent expansion coexist, and the claim that routing traces for GPT-5.6 were spotted in backend logs adds even more pressure given the timing. In the end, the most likely winner isn’t necessarily the “smartest model,” but the one that can finish the job well enough, more cheaply, and more reliably.

*Source: [ AI Revolution ]

– DeepSeek Just Started a Global AI War And Exposed GPT-5.6